
Entropy equation

12/11/2023

That said, the expression for the entropy you deduced holds for constant $V$. To calculate entropy changes for a chemical reaction, recall what we have seen for enthalpy: the energy given off (or absorbed) by a reaction, monitored by noting the change in temperature of the surroundings, can be used to determine the enthalpy of the reaction.

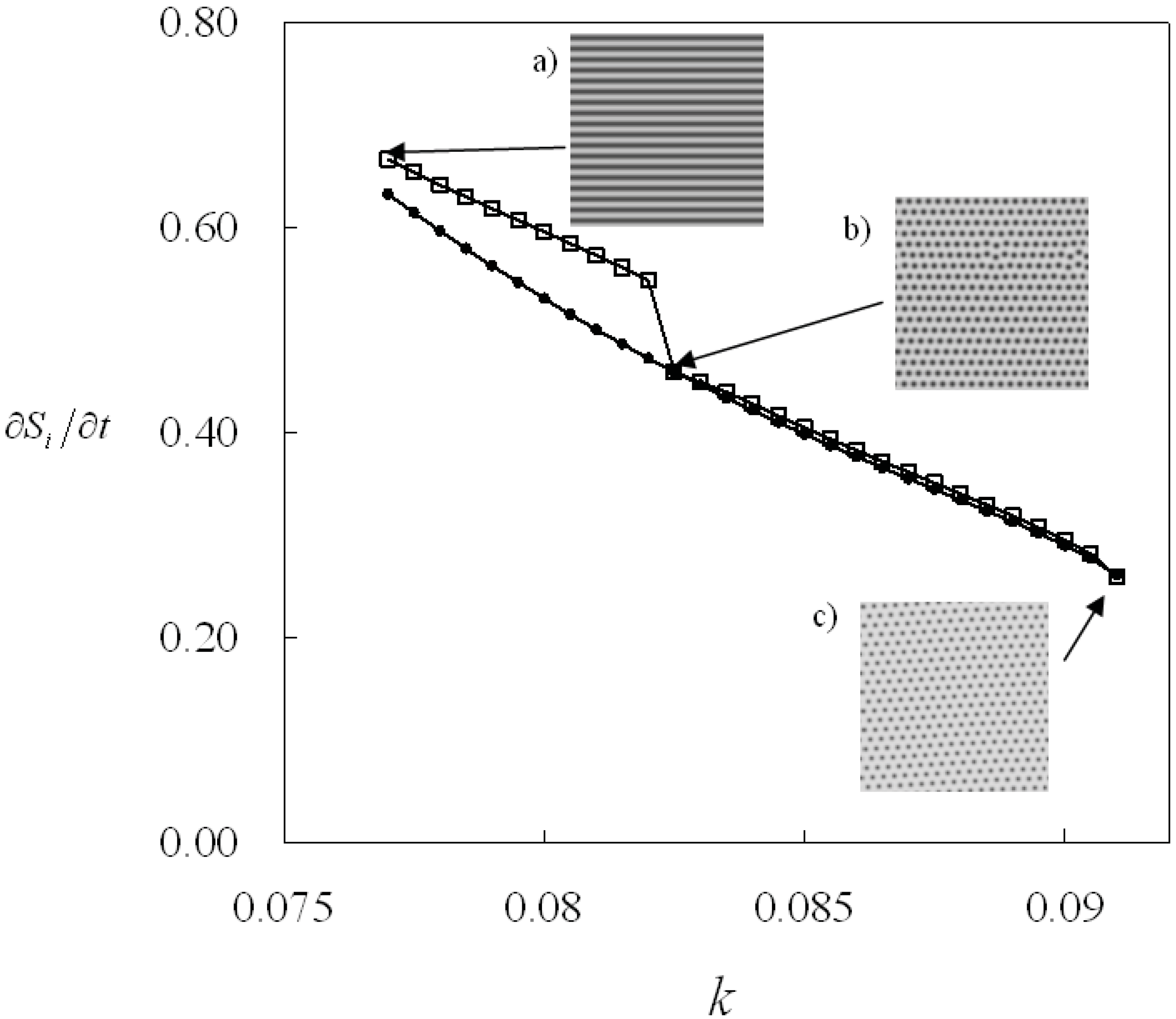

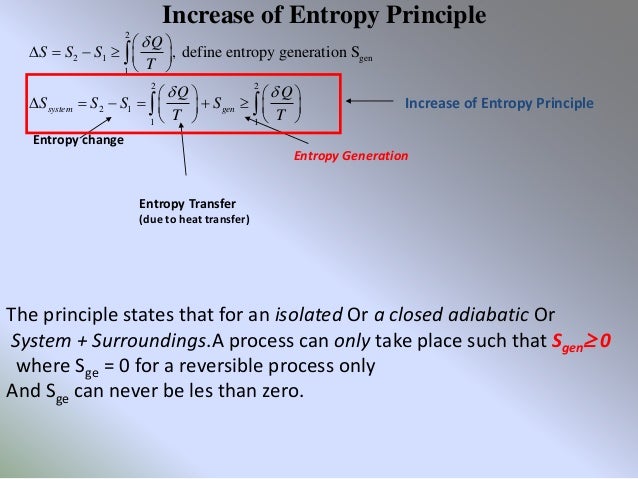

Therefore, if you take $T$ and $V$ as the "system variables", the function $S(T,V)$ is the only thing you need.

In the Boltzmann picture, $S = k_B \ln W$, where $W$ is the number of microstates. Since $W$ is a natural number (1, 2, 3, ...), the entropy is either zero or positive ($\ln 1 = 0$, $\ln W \geq 0$).

My main concern is PDE and how various notions involving entropy have influenced our understanding of them, as in a mathematics course on partial differential equations. There, one shows such an entropy formula and derives some applications of the new entropy formula.
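The remark that entropy is either zero or positive can be checked numerically. The snippet below is a minimal sketch, assuming the Boltzmann relation $S = k_B \ln W$ with $W$ the number of microstates; the function name `boltzmann_entropy` and the symbol $W$ are illustrative choices, not taken from the post.

```python
import math

# Boltzmann constant in J/K (exact value in the 2019 SI definition).
K_B = 1.380649e-23


def boltzmann_entropy(microstates: int) -> float:
    """Return S = k_B * ln(W) for a natural number of microstates W.

    The name and signature are hypothetical, chosen for illustration.
    """
    if microstates < 1:
        raise ValueError("the number of microstates must be a natural number (>= 1)")
    return K_B * math.log(microstates)


if __name__ == "__main__":
    # A single microstate gives ln 1 = 0, hence zero entropy.
    print(boltzmann_entropy(1))
    # Any larger count gives a strictly positive entropy.
    print(boltzmann_entropy(10**6))
```

Because $W \geq 1$, the logarithm is never negative, which is why the function rejects values below 1 instead of returning a negative entropy.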

The second law of thermodynamics is a physical law based on universal experience concerning heat and energy interconversions. One simple statement of the law is that heat always moves from hotter objects to colder objects (or "downhill"), unless energy in some form is supplied to reverse the direction of heat flow.

Entropy is a state function. That means that it doesn't matter which "path" in the phase diagram you take (even if there is no "path" when the process is irreversible): the entropy change depends only on the initial and final states. An example that helps elucidate the different definitions of entropy is the free expansion of a gas from a volume $V_1$ to a volume $V_2$.

According to the Boltzmann equation, entropy is a measure of the number of microstates available to a system.

In physics, the word entropy has important physical implications as the amount of "disorder" of a system. In mathematics and information theory, a more abstract definition is used. Shannon called this quantity entropy, and it is represented by $H$ in the following formula:

$$H = p_1 \log_s(1/p_1) + p_2 \log_s(1/p_2) + \cdots + p_k \log_s(1/p_k)$$

There are several things worth noting about this equation. First is the presence of the symbol $\log_s$, the logarithm to base $s$. With $s = 2$ the entropy is measured in bits: the (Shannon) entropy of a variable $X$ is defined as

$$H(X) = -\sum_x P(x) \log_2 P(x)$$

bits, where $P(x)$ is the probability that $X$ is in the state $x$, and $P \log_2 P$ is defined as 0 if $P = 0$. The two expressions agree because $p \log(1/p) = -p \log p$.

For anyone who wants to be fluent in machine learning, understanding Shannon's entropy, and what this formula means, is crucial.
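To make the Shannon entropy formula concrete, here is a minimal sketch in Python. It assumes the distribution is given as a list of probabilities that sum to 1; the function name `shannon_entropy` and the `base` parameter (playing the role of $s$) are illustrative, not from the post.

```python
import math
from typing import Sequence


def shannon_entropy(probs: Sequence[float], base: float = 2.0) -> float:
    """Return H = sum_i p_i * log_base(1 / p_i).

    Terms with p_i == 0 contribute 0, following the usual convention.
    The name and signature are hypothetical, chosen for illustration.
    """
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Skip zero-probability states so that log(1 / 0) is never evaluated.
    return sum(p * math.log(1.0 / p, base) for p in probs if p > 0)


if __name__ == "__main__":
    # A fair coin carries one bit of entropy.
    print(shannon_entropy([0.5, 0.5]))
    # A certain outcome carries none.
    print(shannon_entropy([1.0, 0.0]))
    # The same fair coin measured with the natural logarithm (nats).
    print(shannon_entropy([0.5, 0.5], base=math.e))
```

Changing `base` only rescales the result, so the choice of $s$ sets the unit (bits for base 2) without changing which distributions have more or less entropy.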
